American Society for Microbiology: ‘Has Modern Science Become Dysfunctional?’

March 29th, 2012

The recent explosion in the number of retractions in scientific journals is just the tip of the iceberg and a symptom of a greater dysfunction that has been evolving in the world of biomedical research, say the editors-in-chief of two prominent journals in a presentation before a committee of the National Academy of Sciences (NAS) today.

“Incentives have evolved over the decades to encourage some behaviors that are detrimental to good science,” says Ferric Fang, editor-in-chief of the journal Infection and Immunity, a publication of the American Society for Microbiology (ASM), who is speaking today at the meeting of the Committee on Science, Technology, and Law of the NAS along with Arturo Casadevall, editor-in-chief of mBio®, the ASM’s online, open-access journal.

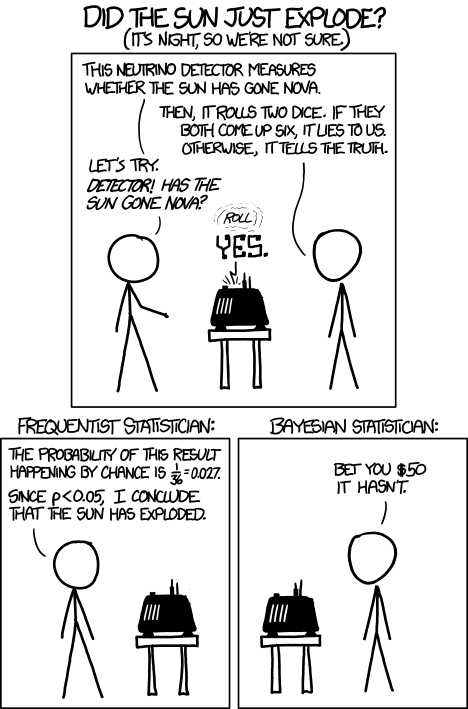

In the past decade the number of retraction notices for scientific journals has increased more than 10-fold while the number of journal articles published has only increased by 44%. While retractions still represent a very small percentage of the total, the increase is disturbing because it undermines society’s confidence in scientific results and in public policy decisions that are based on those results, says Casadevall. Some of the retractions are due to simple error but many are a result of misconduct including falsification of data and plagiarism.
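To put the two growth figures side by side, here is a rough back-of-the-envelope sketch. It assumes the rounded numbers quoted above (a 10-fold rise in retractions, treated as a lower bound, and a 44% rise in articles) and is only illustrative arithmetic, not a calculation from the press release itself.

```python
# Illustrative arithmetic only; inputs are the rounded figures quoted above.
retraction_growth = 10.0  # "more than 10-fold", so treated as a lower bound
article_growth = 1.44     # articles published rose by 44%

# Growth in retractions per published article over the same period.
rate_growth = retraction_growth / article_growth

print(f"Retractions per article grew at least {rate_growth:.1f}-fold")
# prints: Retractions per article grew at least 6.9-fold
```

In other words, even after allowing for the larger number of papers being published, the retraction rate per article would still be up roughly sevenfold under these assumptions.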

More concerning, say the editors, is that this trend may be a symptom of a growing dysfunction in the biomedical sciences, one that needs to be addressed soon. At the heart of the problem is an economic incentive system fueling a hypercompetitive environment that is fostering poor scientific practices, including frank misconduct.

Via: http://www.allgov.com/Controversies/Vie ... als_120418

Retraction Crisis Hits Scientific Journals

Wednesday, April 18, 2012

Three scientific journals have published articles over the past two years warning of the rise in retractions and misconduct by researchers who have fudged results.

The latest publication to do so was Infection and Immunity, which revealed it had been duped repeatedly by the same scientist, Naoki Mori of the University of the Ryukyus in Japan, who had also included questionable facts in papers published elsewhere.

A former editor of the publication, Dr. Arturo Casadevall, blamed “a winner-take-all game” in science today that has created “perverse incentives that lead scientists to cut corners and, in some cases, commit acts of misconduct,” according to The New York Times.

Another journal, Nature, reported last year a tenfold increase in retractions over the past decade even though the number of published papers only increased by 44%. Before that, the Journal of Medical Ethics published a study in 2010 that said a rise in recent retractions was the fault of misconduct and “honest scientific mistakes.” It calculated that the number of retractions had more than tripled from 50 in 2005 to 180 in 2009.

Dr. Ferric Fang, the editor-in-chief of Infection and Immunity, pointed out that the increased competition for jobs may be a major contributing factor to the falsification problem. According to The New York Times, “In 1973, more than half of biologists had a tenure-track job within six years of getting a Ph.D. By 2006 the figure was down to 15 percent.”

Via: http://www.reuters.com/article/2012/03/ ... 2P20120328

In cancer science, many "discoveries" don't hold up

(Reuters) - A former researcher at Amgen Inc has found that many basic studies on cancer -- a high proportion of them from university labs -- are unreliable, with grim consequences for producing new medicines in the future.

During a decade as head of global cancer research at Amgen, C. Glenn Begley identified 53 "landmark" publications -- papers in top journals, from reputable labs -- for his team to reproduce. Begley sought to double-check the findings before trying to build on them for drug development.

Result: 47 of the 53 could not be replicated. He described his findings in a commentary piece published on Wednesday in the journal Nature.
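As a quick sanity check on what that result means in percentage terms, here is a small sketch using only the two counts reported above; the variable names are just for illustration.

```python
# Counts as reported in the article.
total_studies = 53
not_replicated = 47

# Studies whose findings the team was able to confirm.
replicated = total_studies - not_replicated
failure_share = not_replicated / total_studies

print(f"{replicated} of {total_studies} confirmed; {failure_share:.0%} not replicated")
# prints: 6 of 53 confirmed; 89% not replicated
```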

"It was shocking," said Begley, now senior vice president of privately held biotechnology company TetraLogic, which develops cancer drugs. "These are the studies the pharmaceutical industry relies on to identify new targets for drug development. But if you're going to place a $1 million or $2 million or $5 million bet on an observation, you need to be sure it's true. As we tried to reproduce these papers we became convinced you can't take anything at face value."

The failure to win "the war on cancer" has been blamed on many factors, from the use of mouse models that are irrelevant to human cancers to risk-averse funding agencies. But recently a new culprit has emerged: too many basic scientific discoveries, done in animals or cells growing in lab dishes and meant to show the way to a new drug, are wrong.

Begley's experience echoes a report from scientists at Bayer AG last year. Neither group of researchers alleges fraud, nor would they identify the research they had tried to replicate.

But they and others fear the phenomenon is the product of a skewed system of incentives that has academics cutting corners to further their careers.

George Robertson of Dalhousie University in Nova Scotia previously worked at Merck on neurodegenerative diseases such as Parkinson's. While at Merck, he also found many academic studies that did not hold up.

"It drives people in industry crazy. Why are we seeing a collapse of the pharma and biotech industries? One possibility is that academia is not providing accurate findings," he said.

BELIEVE IT OR NOT

Over the last two decades, the most promising route to new cancer drugs has been one pioneered by the discoverers of Gleevec, the Novartis drug that targets a form of leukemia, and Herceptin, Genentech's breast-cancer drug. In each case, scientists discovered a genetic change that turned a normal cell into a malignant one. Those findings allowed them to develop a molecule that blocks the cancer-producing process.

This approach led to an explosion of claims of other potential "druggable" targets. Amgen tried to replicate the new papers before launching its own drug-discovery projects.

Scientists at Bayer did not have much more success. In a 2011 paper titled, "Believe it or not," they analyzed in-house projects that built on "exciting published data" from basic science studies. "Often, key data could not be reproduced," wrote Khusru Asadullah, vice president and head of target discovery at Bayer HealthCare in Berlin, and colleagues.

Of 47 cancer projects at Bayer during 2011, less than one-quarter could reproduce previously reported findings, despite the efforts of three or four scientists working full time for up to a year. Bayer dropped the projects.

Bayer and Amgen found that the prestige of a journal was no guarantee a paper would be solid. "The scientific community assumes that the claims in a preclinical study can be taken at face value," Begley and Lee Ellis of MD Anderson Cancer Center wrote in Nature. It assumes, too, that "the main message of the paper can be relied on ... Unfortunately, this is not always the case."

When the Amgen replication team of about 100 scientists could not confirm reported results, they contacted the authors. Those who cooperated discussed what might account for the inability of Amgen to confirm the results. Some let Amgen borrow antibodies and other materials used in the original study or even repeat experiments under the original authors' direction.

Some authors required the Amgen scientists to sign a confidentiality agreement barring them from disclosing data at odds with the original findings. "The world will never know" which 47 studies -- many of them highly cited -- are apparently wrong, Begley said.

The most common response by the challenged scientists was: "you didn't do it right." Indeed, cancer biology is fiendishly complex, noted Phil Sharp, a cancer biologist and Nobel laureate at the Massachusetts Institute of Technology.

Even in the most rigorous studies, the results might be reproducible only in very specific conditions, Sharp explained: "A cancer cell might respond one way in one set of conditions and another way in different conditions. I think a lot of the variability can come from that."

THE BEST STORY

Other scientists worry that something less innocuous explains the lack of reproducibility.

Part way through his project to reproduce promising studies, Begley met for breakfast at a cancer conference with the lead scientist of one of the problematic studies.

"We went through the paper line by line, figure by figure," said Begley. "I explained that we re-did their experiment 50 times and never got their result. He said they'd done it six times and got this result once, but put it in the paper because it made the best story. It's very disillusioning."

Such selective publication is just one reason the scientific literature is peppered with incorrect results.

For one thing, basic science studies are rarely "blinded" the way clinical trials are. That is, researchers know which cell line or mouse got a treatment or had cancer. That can be a problem when data are subject to interpretation, as a researcher who is intellectually invested in a theory is more likely to interpret ambiguous evidence in its favor.

The problem goes beyond cancer.

On Tuesday, a committee of the National Academy of Sciences heard testimony that the number of scientific papers that had to be retracted increased more than tenfold over the last decade; the number of journal articles published rose only 44 percent.

Ferric Fang of the University of Washington, speaking to the panel, said he blamed a hypercompetitive academic environment that fosters poor science and even fraud, as too many researchers compete for diminishing funding.

"The surest ticket to getting a grant or job is getting published in a high-profile journal," said Fang. "This is an unhealthy belief that can lead a scientist to engage in sensationalism and sometimes even dishonest behavior."

The academic reward system discourages efforts to ensure a finding was not a fluke. Nor is there an incentive to verify someone else's discovery. As recently as the late 1990s, most potential cancer-drug targets were backed by 100 to 200 publications. Now each may have fewer than half a dozen.

"If you can write it up and get it published you're not even thinking of reproducibility," said Ken Kaitin, director of the Tufts Center for the Study of Drug Development. "You make an observation and move on. There is no incentive to find out it was wrong."